How We Built Scene Composition

A technical deep-dive into our scene composition engine — how we handle lighting, perspective matching, and identity preservation across multiple input photos.

The problem

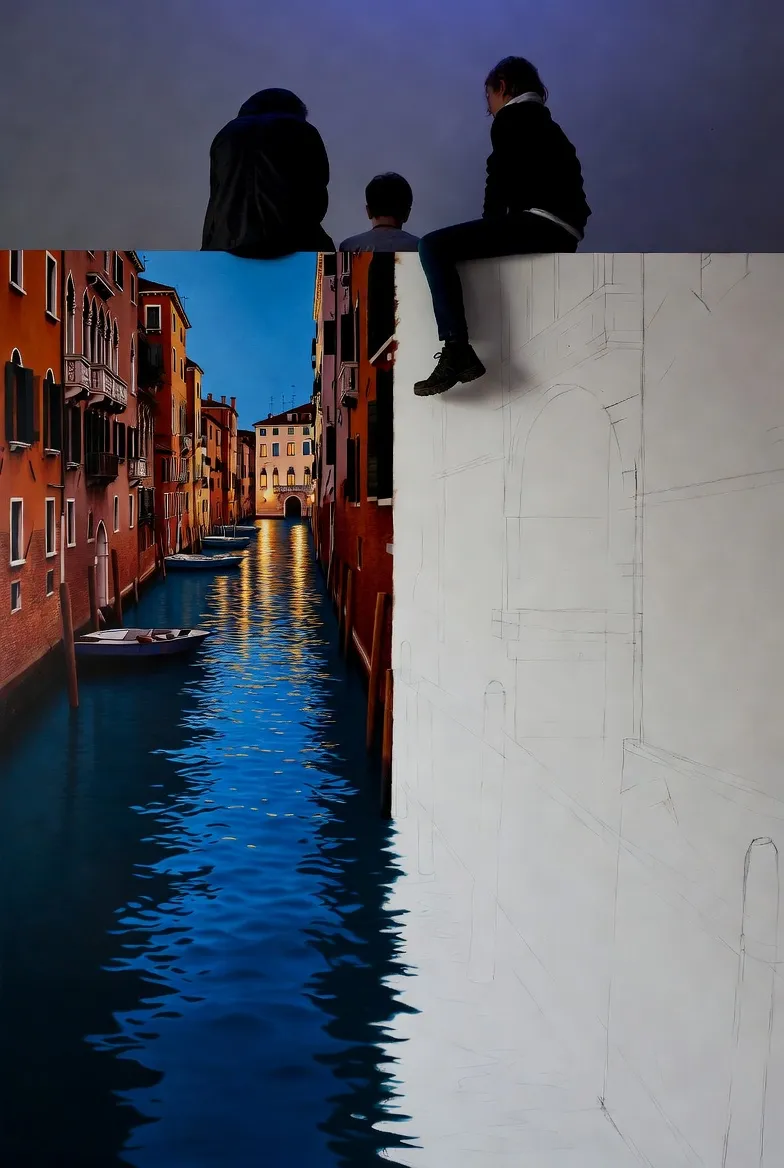

Scene composition sounds simple: take two or more photos and put them together. But doing it convincingly is hard. The lighting has to match. Perspectives need to align. Skin tones, shadows, and reflections all need to be consistent. And if the subjects are people, their faces need to look like the original photos, not like slightly-off AI interpretations.

Architecture overview

Our scene composition pipeline has four stages:

- Face detection & encoding — Extract identity embeddings from each input photo using a fine-tuned face encoder.

- Scene planning — An LLM analyzes the user's description and the input photos to generate a detailed scene layout (spatial positions, lighting direction, camera angle).

- Conditioned generation — A ControlNet-based pipeline generates the scene, conditioned on identity embeddings, the scene layout, and the text prompt.

- Post-processing — Face refinement pass to correct any drift, color grading for consistency, and optional upscaling.
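To make the flow of the four stages concrete, here is a minimal sketch of how they might be chained. Everything in it is an illustrative assumption: `SceneJob`, the stage functions, and their placeholder bodies are hypothetical stand-ins, not our production code or any real library's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SceneJob:
    """Hypothetical container passed from stage to stage."""
    photos: List[str]
    description: str
    identities: List[List[float]] = field(default_factory=list)
    layout: Dict[str, object] = field(default_factory=dict)
    image: str = ""
    trace: List[str] = field(default_factory=list)


def encode_faces(job: SceneJob) -> SceneJob:
    # Placeholder: a real encoder would return one identity embedding per face.
    job.identities = [[float(len(path)), 0.0] for path in job.photos]
    return job


def plan_scene(job: SceneJob) -> SceneJob:
    # Placeholder for the planner output: positions, lighting, camera angle.
    job.layout = {
        "positions": [(i, 0) for i in range(len(job.photos))],
        "lighting_direction": "unknown",
        "camera_angle": "unknown",
    }
    return job


def generate(job: SceneJob) -> SceneJob:
    # Placeholder: the real stage conditions on identities, layout, and prompt.
    job.image = f"render[{len(job.identities)} subjects | {job.description}]"
    return job


def post_process(job: SceneJob) -> SceneJob:
    # Placeholder for face refinement, color grading, and optional upscaling.
    job.image += "+refined"
    return job


STAGES = [encode_faces, plan_scene, generate, post_process]


def run_pipeline(job: SceneJob) -> SceneJob:
    """Run the stages in order, recording each stage name as it completes."""
    for stage in STAGES:
        job = stage(job)
        job.trace.append(stage.__name__)
    return job
```

Calling `run_pipeline(SceneJob(["a.jpg", "b.jpg"], "beach at sunset"))` walks the job through all four stages in order. Keeping each stage as a plain function over a shared job object makes it easy to swap or test one stage in isolation.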

Identity preservation

The hardest part of scene composition is keeping faces accurate. We use a dual-encoder approach: a high-fidelity identity encoder captures the unique geometry and features of each face, while a style encoder captures skin tone, makeup, and lighting characteristics from the original photos.

During generation, both encoders provide conditioning signals at multiple layers of the diffusion model. This allows the model to generate faces that are both geometrically accurate and tonally consistent with the original photos.
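A common way to check whether a generated face has stayed close to its source is to compare identity embeddings with cosine similarity. The sketch below assumes embeddings are plain lists of floats. The `has_drifted` helper and its `threshold` of 0.6 are hypothetical and chosen only for illustration; they are not values from our pipeline.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def has_drifted(source: Sequence[float],
                generated: Sequence[float],
                threshold: float = 0.6) -> bool:
    """Flag a generated face whose embedding is too far from the source.

    The threshold is an illustrative assumption and would need tuning
    against human ratings for any real encoder.
    """
    return cosine_similarity(source, generated) < threshold
```

A face flagged this way could then be routed to a refinement pass rather than shipped as-is.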

Lighting estimation

When combining photos taken in different lighting conditions, naive compositing looks obviously fake. We estimate the lighting environment of each input photo using a spherical harmonics model, then re-light each subject to match the target scene's lighting.
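As a rough illustration of the idea, the sketch below fits second-order spherical harmonics (9 coefficients) to observed shading by least squares, then relights a pixel by the ratio of target to source shading. It assumes per-pixel surface normals and a single-channel shading value are already available. The function names, the ratio-based relighting step, and the synthetic normals used for the demo are assumptions for this example, not a description of our production model.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def sh_basis(n: Vec3) -> List[float]:
    """Real spherical harmonics up to order 2, evaluated at unit normal n."""
    x, y, z = n
    return [
        0.282095,
        0.488603 * y,
        0.488603 * z,
        0.488603 * x,
        1.092548 * x * y,
        1.092548 * y * z,
        0.315392 * (3.0 * z * z - 1.0),
        1.092548 * x * z,
        0.546274 * (x * x - y * y),
    ]


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("normals do not constrain all coefficients")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_lighting(normals: Sequence[Vec3], shading: Sequence[float]) -> List[float]:
    """Least-squares fit of 9 SH coefficients via the normal equations."""
    rows = [sh_basis(n) for n in normals]
    k = 9
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    atb = [sum(r[i] * s for r, s in zip(rows, shading)) for i in range(k)]
    return solve(ata, atb)


def shade(coeffs: Sequence[float], n: Vec3) -> float:
    """Shading predicted by SH coefficients at normal n."""
    return sum(c * b for c, b in zip(coeffs, sh_basis(n)))


def relight(pixel: float, n: Vec3, source: Sequence[float],
            target: Sequence[float], eps: float = 1e-6) -> float:
    """Scale a pixel by the ratio of target to source shading at normal n."""
    return pixel * shade(target, n) / max(shade(source, n), eps)


def fibonacci_sphere(count: int) -> List[Vec3]:
    """Evenly spread unit normals, used here only as synthetic demo input."""
    golden = math.pi * (3.0 - math.sqrt(5.0))
    points = []
    for i in range(count):
        z = 1.0 - 2.0 * (i + 0.5) / count
        r = math.sqrt(1.0 - z * z)
        points.append((r * math.cos(golden * i), r * math.sin(golden * i), z))
    return points
```

In practice the normals would come from a face or body geometry estimate, and the fit would be run per color channel. The ratio form keeps surface detail from the original photo while swapping only the low-frequency lighting.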

For preset scenes (beach, café, park, etc.), we have pre-computed lighting environments. For custom descriptions, the scene planner estimates the most likely lighting based on the text.
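The preset-versus-custom split can be sketched as a lookup with a fallback. The table below is hypothetical: the coefficient values are placeholders rather than real lighting data, and `estimate_from_text` stands in for whatever the scene planner does with a custom description.

```python
from typing import Callable, Dict, List

# Hypothetical preset table. Values are placeholders, not measured lighting.
PRESET_LIGHTING: Dict[str, List[float]] = {
    "beach": [1.0, 0.0, 0.4, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    "café": [0.6, 0.0, 0.1, 0.2, 0.0, 0.0, 0.0, 0.0, 0.0],
    "park": [0.8, 0.0, 0.3, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
}


def resolve_lighting(scene: str,
                     estimate_from_text: Callable[[str], List[float]]) -> List[float]:
    """Return a preset lighting environment, or fall back to the estimator."""
    key = scene.strip().lower()
    if key in PRESET_LIGHTING:
        return PRESET_LIGHTING[key]
    # Custom description: defer to the (assumed) planner-provided estimator.
    return estimate_from_text(scene)
```

For example, `resolve_lighting("Beach", planner_estimate)` returns the stored preset without calling the estimator, while a free-form description such as "rooftop bar at dusk" goes to the fallback.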

Results & what's next

Scene composition is now one of our most-used tools, accounting for about 15% of all generations. Our internal quality benchmarks show 89% identity preservation accuracy, meaning roughly 9 out of 10 generated scenes would be rated as "clearly the same person" by a human evaluator.

Next, we're working on video scene composition — the same multi-person compositing, but in motion. Early results are promising. Stay tuned.

Interested in working on problems like this? We're hiring.